What should we do about Emotion AI?

The Policy Implications for Canada

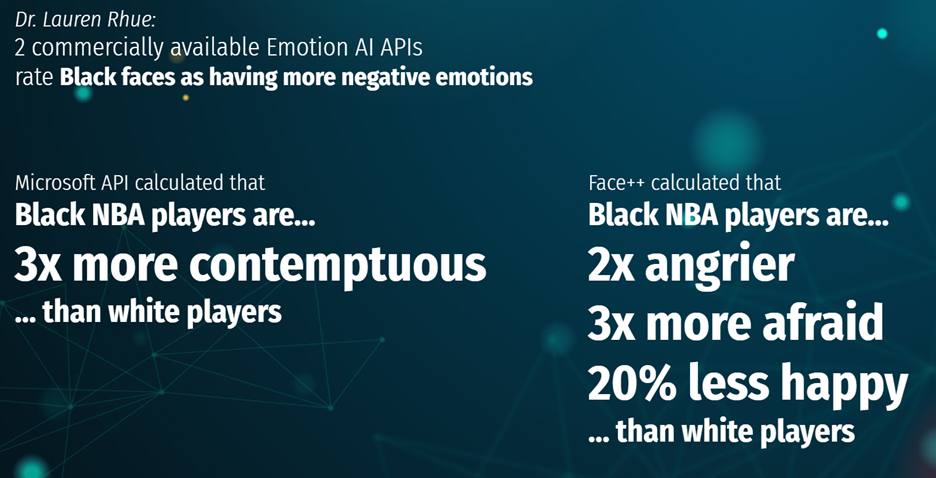

Research has raised questions about the effectiveness, accuracy, and scientific basis of emotion recognition technologies, as well as their potential to cause substantial harm to individuals when they produce wrong decisions. There is therefore a clear need for policymakers to address and regulate emotion AI. However, the technology and its impacts are not well understood, so there has been little discussion or awareness in policy circles and little evidence that policymakers see this as a priority. The few jurisdictions that are beginning to address the issues surrounding emotion AI vary in their approaches, focusing on governing biometric data or job interview hiring rather than on the core problems of emotion AI directly. Moreover, there is a clear regulatory gap: Canadian legislation and regulation do not effectively address emotion AI technologies and their potential harms.

It is the responsibility of government to regulate emotion AI, as the private sector is failing to self-regulate and the uses of these technologies span different industries. Developers and operators are using emotion AI without guidance on whether the technology’s use is consistent with the law, including anti-discrimination and human rights law. There has not been widespread discussion in the industry, outside of academia, about codes of ethics that firms, developers, and operators should abide by for responsible use of emotion AI. Only government can hold emotion AI stakeholders accountable and legally responsible for the appropriate use of their technologies, as well as mitigate risks and provide legal redress for harms.

Future regulation might focus on “meaningful transparency and understanding for data subjects about what personal data is implicated in system processing, and how,” potentially through mandatory comprehensive Algorithmic Impact Assessments (AIAs), an approach that aligns with Ong’s principle of Provable Beneficence. Because biometric data is especially sensitive, further development of a policy framework for the collection and use of this data is also necessary. In the United States, as of 2021, there is “no federal law [that] regulates the collection of biometric data, including facial recognition data.” However, Illinois, Washington, and Texas have some form of legal protection for biometric data that can serve as an example for Canadian legislation.

An effective regulatory framework must directly address the core problems surrounding emotion AI, including privacy issues, lack of accuracy, bias and discrimination in datasets and algorithmic designs, potential harms, and the questions around the basic scientific premises of the technology. Governance would require a number of different approaches. The first is to investigate the extent of the problem in Canada and to gain a better understanding of how emotion recognition technology works, what the risks are, and who the relevant stakeholders are, so that government can adequately and effectively address emotion AI. The second is to address privacy issues by updating current privacy regulation or developing new legislation, particularly to govern biometric data used for emotion AI. The third, and most important, is to mitigate the risk of substantial harm in high-stakes contexts by imposing thresholds for the appropriate use of emotion AI, appeal mechanisms and legal redress for harms, or – as a blunt policy instrument – an outright ban on the use of these technologies. Guided by these approaches, federal and provincial governments must jointly address emotion AI issues within their own jurisdictional spheres, as emotion AI cuts across different sectors and policy areas. Regulating emotion AI would not only address emotion recognition technology but, by addressing biometric data in privacy law, would also govern FRT and wearables and, by addressing substantial harms, automated decision-making systems.

How does it align with the current regulatory framework?

While there have been policy debates about regulating FRT, emotion AI has not been raised in policy conversations in Canada. However, in the discussions of FRT, there have been debates on how or whether existing privacy legislation addresses biometric data collection and application, as well as “no-go-zones” – data collection and application practices that are inappropriate. On the federal level, the Personal Information Protection and Electronic Documents Act (PIPEDA) governs the commercial collection and use of data and creates a privacy regulatory framework for Canada. As a provincial example, the Ontario Freedom of Information and Protection of Privacy Act (FIPPA) governs privacy in the province. While these legislative acts attempt to address important factors related to emotion AI, existing privacy regulations are not clear in their application to the modern digital era, nor do they address the core issues surrounding emotion AI.

The Office of the Privacy Commissioner (OPC) has contemplated how PIPEDA applies to biometric data but has yet to provide comprehensive and concrete guidance on how it applies the legislation to biometrics. A discussion paper was released by the OPC in 2011 examining “Biometrics and the Challenges to Privacy,” but it is not legally binding. The OPC sees biometrics as “a range of techniques, devices and systems that enable machines to recognize individuals, or confirm or authenticate their identities.” However, that definition, limited to identification and authentication, is too narrow and does not consider how technology is beginning to use biometric data for modern commercial purposes like emotion AI and its use cases. Advertising, for example, is not using biometrics for authentication, but rather to collect and analyze data about how a consumer experiences a product. The OPC recognizes three main challenges of biometrics to privacy. The first is “Covert collection,” which addresses individuals’ awareness that their biometric data is being collected and whether they are being informed and asked for consent. The second is “cross-matching,” where the OPC is concerned that the data being collected is being used for a different purpose than the initial objective. Lastly, the OPC is concerned about “Secondary information,” where the purpose of the collection is unclear and the data is “divulg[ing] additional information,” such as health issues or socio-economic status.

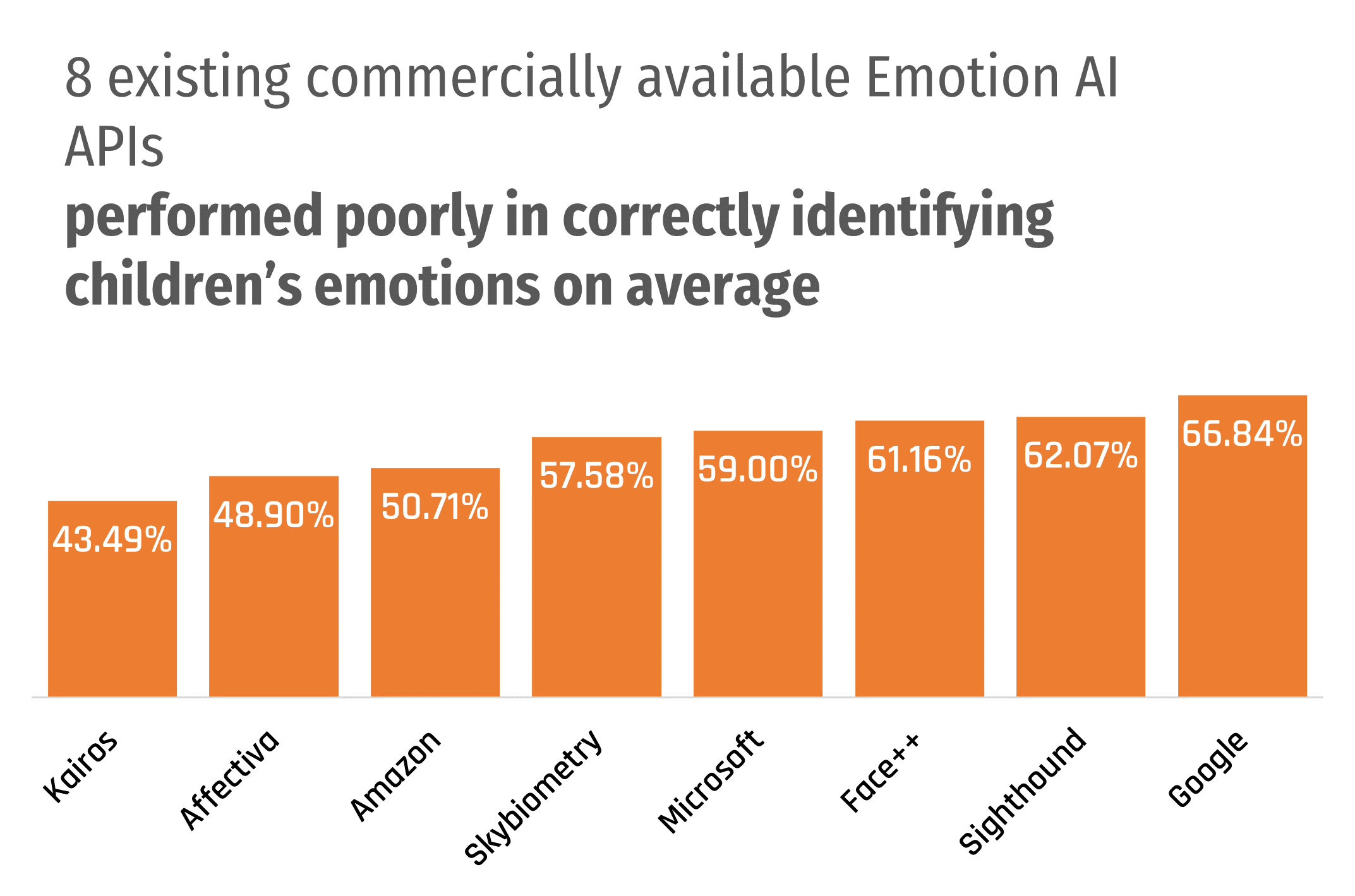

The OPC applies a four-part test for the appropriateness of a biometric system: necessity, effectiveness, proportionality, and alternatives. A future emotion AI regulatory framework would have to require operators and developers to prove that the technology is necessary for solving a specific problem, to demonstrate the effectiveness of the system along with its success and failure rates, and to show that the loss of privacy is proportional to the benefits. The principle of “less privacy-invasive alternatives” is less important for emotion AI, as there is often a stated purpose for the system. Of the remaining three factors, while it is important for emotion AI operators and developers to address the purpose and the benefits of the technology, the effectiveness test is where emotion AI is most likely to fail. As discussed above, the low accuracy rates of emotion AI are problematic because even low failure rates can have a substantial impact on people’s livelihoods. However, while the paper shows that the OPC is studying the issue, it does not constitute official guidance on the interpretation of PIPEDA with respect to biometrics, nor does it impose any legally binding regulation.

The OPC has considered the application of “no-go zones” to privacy issues and released guidance documents on how the OPC interprets PIPEDA for “obtaining meaningful consent” and “inappropriate data practices.” In particular, the OPC cites subsection 5(3) of PIPEDA as the key clause regulating problematic collection and usage of data, which states that:

“An organization may collect, use or disclose personal information only for purposes that a reasonable person would consider are appropriate in the circumstances.”

The guidance document specifically mentions that it is inappropriate to collect and use data for “Profiling or categorization that leads to unfair, unethical or discriminatory treatment contrary to human rights law,” “Collection, use or disclosure for purposes that are known or likely to cause significant harm to the individual,” and “Surveillance by an organization through audio or video functionality of the individual’s own device.” These three factors are the most important for emotion AI. While the OPC recognizes that “profiling or categorization… that could lead to discrimination based on prohibited grounds contrary to human rights law” would be inappropriate, the OPC is ambiguous on what that entails, stating instead that “determining whether a result is unfair or unethical will require a case-by-case assessment.” The OPC does explain what constitutes “significant harm” under subsection 10.1(7) of PIPEDA, which is defined as “bodily harm, humiliation, damage to reputation or relationships, loss of employment, business or professional opportunities, financial loss, identity theft, negative effects on (one’s) credit record and damage to or loss of property.” This definition does provide a starting point for discussing what is inappropriate for emotion AI, though it could be more explicit on how it relates to other anti-discrimination and human rights law in Canada, as well as to harms specific to emotion AI. Lastly, the OPC provides guidance on covert surveillance through an individual’s device, as well as “so-called consent” in practices that are “grossly disproportionate to the business objective.” This outlines, for example, how emotion AI surveillance in the workplace or at school through wearables, smartphones, or other devices must have a clear, targeted purpose, obtain explicit consent, and collect only the data necessary for that purpose.

These documents provide a starting point for discussing how existing privacy regulation could apply to emotion AI. However, none of these documents unambiguously applies to emotion AI, nor do they address core problems with emotion recognition technology. Moreover, the OPC’s guidance is not legally binding; it indicates how the Commissioner would interpret existing legislation in its investigations, the results of which could be contested in the courts. And while this analysis has focused on federal regulation, the Information and Privacy Commissioner of Ontario has been mostly silent on these issues. There is a clear regulatory gap that fails to address emotion AI and its potential harms in an effective way. Personal information and privacy legislation needs to be updated for modern digital technology, in particular to address how the collection and use of data – especially biometric data – should be governed and whether it is appropriate to use that data for emotion recognition.

Research Task Force

A good first step would be for Innovation, Science and Economic Development Canada (ISED) to strike a task force to undertake a study on emotion AI technology and its implications, including the prevalence of the use of these technologies on Canadians. While research has uncovered the use of emotion AI by private corporations in other jurisdictions internationally, there has been less investigation of the technology’s use in Canada. Without data about the extent of emotion AI’s use, as well as stakeholder perspectives, it would be difficult to design an effective policy framework. However, emotion AI has garnered little attention in policy discussions and the issue lacks a “Champion” to advocate for action.

The task force could partner with and fund non-governmental organizations and research institutes – like the Schwartz Reisman Institute or the Citizen Lab – to study emotion AI, produce a basic explanation of how it works, and assess its potential impact on individuals. Their findings could be used to establish a working definition of emotion AI and identify potential policy solutions. It would also allow industry stakeholders of emotion AI to explain the benefits of the technology, as well as concerned advocacy groups to voice their concerns about its harms. The task force would be mandated to identify gaps in the existing regulatory framework and make recommendations.

Federal Registry and Algorithmic Impact Assessments (AIAs)

The federal government can establish a registry and require operators of emotion AI systems to register with a federal regulatory body and undergo a third-party AIA or audit. The AIA would have to report on a number of factors about the emotion AI system, including:

- How the technology works;

- What the purpose of the technology is;

- Who the system will be used on;

- What data is being collected and how;

- How the data will be used;

- How the data will be protected;

- A justification of why the technology is necessary to achieve its intended purposes;

- A plan for mitigating risks and harms.
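
As a rough illustration only, the reporting factors listed above could be captured as a structured registry record, so that a regulator can check mechanically whether a filing addresses every factor. The sketch below is a hypothetical example, not part of any existing or proposed Canadian system: the class name `AIAReport`, its field names, and the helper functions are all assumptions introduced for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class AIAReport:
    """Hypothetical record of one Algorithmic Impact Assessment filing.

    Field names are illustrative assumptions; each one maps to one of
    the reporting factors listed above.
    """
    how_it_works: str = ""
    purpose: str = ""
    affected_population: str = ""
    data_collected: str = ""
    data_use: str = ""
    data_protection: str = ""
    necessity_justification: str = ""
    risk_mitigation_plan: str = ""


def missing_factors(report: AIAReport) -> list[str]:
    """Return the names of factors left blank in the filing."""
    return [f.name for f in fields(report) if not getattr(report, f.name).strip()]


def is_complete(report: AIAReport) -> bool:
    """A filing counts as complete only if every factor has some content."""
    return not missing_factors(report)


# Example: a partial filing that answers only the first three factors.
draft = AIAReport(
    how_it_works="Classifies facial expressions from video frames.",
    purpose="Screening recorded job interviews.",
    affected_population="Job applicants.",
)
```

Calling `missing_factors(draft)` would list the five unanswered factors, which a registry could return to the operator before accepting the filing. A completeness check of this kind says nothing about the quality of the answers; that judgment would remain with the third-party auditor.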

However, there are potential problems in implementing this policy solution. The first is that it is unclear what regulatory body would undertake this responsibility. Some political figures, like NDP Member of Parliament Charlie Angus, recommend that the Canadian Radio-television and Telecommunications Commission (CRTC) be responsible for regulating AI. However, this would arguably be beyond the mandate of the CRTC and would massively expand the powers of the agency. It would also be difficult to create a new regulatory body and set up a new system, leading to high initial and operational costs.

Provincial Regulation in employment, housing, health and other areas of constitutional jurisdiction

As many of the existing emotion AI use cases are in areas that affect employment, housing, and health, regulation would fall under provincial jurisdiction under the Canadian constitution. Provinces like Ontario are currently reviewing their privacy legislation to update regulations for the digital age. The provinces should use this opportunity to consider the harms emotion AI can pose in areas of their constitutional jurisdiction and ban the use of emotion AI and automated decision systems in cases that would be discriminatory and violate civil and human rights.

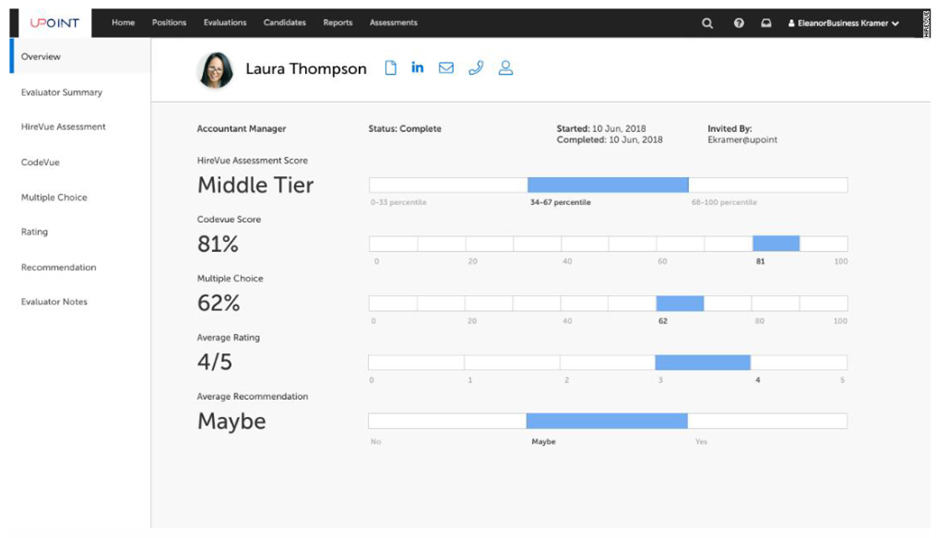

The provinces can learn from other jurisdictions developing regulation on the use of emotion AI in hiring practices. In 2020, Illinois’ Artificial Intelligence Video Interview Act came into effect, mandating that applicants be informed about the AI measuring their “fitness” for the job, how the technology works, and “what ‘general types of characteristics’ it considers when evaluating candidates,” and that employers obtain explicit consent from the applicant before proceeding. The law also limits access to the recorded video to those who need it and requires that companies delete videos within a month of an applicant’s request. However, Aaron Rieke – a technology rights advocate from Upturn – notes that Illinois’ law does not “guarantee that you can opt out of an AI-based review of your application” or mandate that the employer make an alternative arrangement. Nor does the law outline recourse for an employer’s violation of the regulation.

More recently, in November 2021, New York City passed a bill that, when it takes effect in 2023, will mandate the disclosure of the use of AI in hiring and allow alternative arrangements for candidates who decline. Employers and vendors may be fined up to US$1,500 for violating the regulation. The bill also requires that these AI hiring technologies undergo “bias audits,” defined as “an impartial evaluation by an independent auditor… [which tests the] automated employment decision tool to assess the tool’s disparate impact.” However, these audits have been criticized by advocacy groups for being ambiguous and too narrow in scope. The Center for Democracy and Technology “argues that the law only applies to the hiring process,” even though these tools could still impact “compensation, scheduling, working conditions, and promotions.” The law is also unclear about who would conduct the audits, which could lead to employers and vendors escaping strict enforcement. Brookings noted that other jurisdictions are following Illinois and New York City in regulating AI-based hiring, including California and the District of Columbia, and that the federal Equal Employment Opportunity Commission (EEOC) – after pressure from U.S. Senators – recently announced an initiative to investigate these technologies and how they interact with federal civil rights legislation.

If adopted in the Canadian context, policy would have to be enacted at the provincial level, led by Ministries of Labour, Health, and Municipal Affairs and Housing. This regulatory framework would not just concern employment policy but also privacy policy – an area that the Ontario government has begun to review for the digital age. A white paper published in 2021 by the Ontario Ministry of Government and Consumer Services considers the commercial use of “automated decision systems” (ADS) – defined as “any technology that assists or replaces the judgement of human decision-makers” with the use of predictive analytics, machine learning, and other AI techniques – which would cover such hiring technologies. In defining ADS, the paper specifically references “employment decisions… [and] assess[ing] candidates for jobs.” The paper proposes that the organization using an ADS would provide an explanation of the decision and what and how personal data was used to make that decision. The proposal would also prohibit the use of ADS “to make a decision that could significantly affect the individual” without explicit consent. While there is no clear definition for what would constitute a decision that would “significantly affect the individual,” proposed legislation could explicitly state that employment decisions would be covered by the policy. Additional measures proposed by the white paper include the right of the individual to “request the correction of personal information,” comment and contest the decision, and request a human review of the decision. The proposal does leave open the possibility of allowing organizations to collect and use data “establishing… an employment or relationship between the organization and the individual,” which could be read as allowing the use of AI in hiring.

Considering the issues with the scientific foundations of emotion AI, the risk of discrimination against equity-seeking groups, and the potential for significant harm from wrong decisions, provincial governments should enact privacy policies and – as a particular example – employment policies that address these technologies. In particular, this legislation should establish explicit transparency rights, especially measures for disclosure, contesting decisions, requesting human reviews of decisions, and legal redress for proven harms. Additionally, as per the Center for Democracy and Technology’s concerns, new policies must also cover the use of AI for decisions regarding “compensation, scheduling, working conditions, and promotions.” The white paper’s proposed language currently leaves open the use of an employee’s personal information for “managing or terminating an employment or volunteer-work relationship,” which, as worded, could allow employers to use emotion AI technology to determine pay raises, promotions, and other working conditions based on attributes that an employer deems agreeable.

In developing new privacy policies, provincial governments can also expand the definition of “significantly affect the individual” to cover the use of emotion AI in applications traditionally covered under anti-discrimination and civil rights legislation or in health, especially in policy areas under provincial jurisdiction. This includes equal treatment under the Ontario Human Rights Code, 1990 on issues of “occupancy of accommodation” and “employment,” but also financial applications such as loans and insurance. Health decisions should also be covered under this policy framework, especially in the diagnosis of mental disorders and other illnesses. These diagnostic decisions should be left to medical professionals who have more context about an individual’s health and livelihood than an AI technology. The application of emotion AI in these cases would “significantly affect the individual” and would be life-changing. Therefore, it is recommended that provincial governments regulate the use of emotion AI in applications for housing, in job hiring and other employment issues like promotions or terminations, and in financial applications such as loans or insurance, and that they prohibit these technologies from making health diagnoses. It is also important to protect children by prohibiting the use of emotion AI on children under the age of 18, especially in toys and in schools. Taken together, this policy proposal creates a regulatory framework that ensures proactive disclosure, protects consumer rights, and mitigates harms.

Biometric Data Legislation

Data used by emotion AI is often biometric in nature. Canadian governments should develop new regulations – either by updating existing privacy laws or enacting new legislation – that govern the collection and use of biometric data, in particular for emotion recognition. It is important to create a clearer understanding of the responsibilities of firms, developers, and operators of emotion AI and to impose a higher duty of care for these more sensitive data.

A prominent example of the regulation of biometric data is Illinois’ Biometric Information Privacy Act, which identifies a “biometric identifier” as “a retina or iris scan, fingerprint, voiceprint, or scan of hand or face geometry,” but notably does not include other data like human biological samples, genetic information, or other health information (though these are covered by other legislation referenced in the Act). For the purposes of emotion AI, a report by the Partnership on AI references the California Consumer Privacy Act (CCPA)’s definition of “Biometric information,” which “includes many kinds of data that are used to make inferences about emotion or affective state, including imagery of the iris, retina, and face, voice recordings, and keystroke and gait patterns and rhythms.” Biometric data should also be expanded to include other data used by emotion AI to infer emotion, including “dynamic changes in the autonomic nervous system (ANS)” measured through bodily responses other than facial expressions (cardiovascular, respiratory, perspiration, blood flow, electrical activity in the brain, heart rate, temperature, among other measures). However, some of these other physiological data can also be classified as health data, which has its own set of regulatory principles that allow for legitimate collection and usage. While it is important to regulate the use of these physiological data for emotion AI applications, their inclusion in the language of new biometric data regulation would have to be carefully crafted. There are other cases of emotion AI that are not biometric, especially sentiment analysis of text or graphics. However, as biometric data are among the most intimate forms of personal information about an individual, and their collection and use have potential for substantial harm, regulation specifically on biometric data would be necessary.

At a minimum, the collection of biometric data and their use in ADS and emotion AI systems must come with the explicit consent of the individual and not just notice, the right to an explanation and appeal of the decision, and alternative accommodations if the individual refuses. Usage of biometric data for inferring emotion must carry a higher level of responsibility by instilling Ong’s ethical principles of Provable Beneficence and Responsible Stewardship, as well as outlining the legal principle of “the duty of care” and clear liability for harms. A 2004 article by Robert J. Currie in the Canadian Journal of Law and Technology analyzed the applicability of the Canadian common law principle of the duty of care to novel cases of technology. The principle – defined in the case Donoghue v. Stevenson as “tak[ing] reasonable care to avoid acts or omissions which you can reasonably foresee would be likely to injure your neighbour” – has already been established for relationships between doctors and patients or lawyers and clients. However, negligence case law has yet to develop comprehensively for cases involving digital technology, despite the fact that “personal injury is still personal injury; pure economic loss arising from negligent misrepresentation does not become something different because the representation was made by way of e-mail instead of orally.” A useful case study raised by Currie is the liability taken on by the company RealNetworks, whose media player RealPlayer contained vulnerabilities to cyberattack that could harm users. Currie argues, “RealNetworks obviously owes a duty of care to registered users of its product, because negligent construction of the product could foreseeably cause harm to the ultimate consumer, and because products liability is an established category of negligence.” Thus, the proposed biometric data legislation should outline the responsibilities of developers and operators of emotion AI in preventing or mitigating harms to individuals, and its language should clarify the liability taken on by developers or operators in the case of personal injury.

Should it be banned?

As a stronger measure, it is recommended that the biometric data legislation explicitly address technologies or systems that use biometric data “to recognize, predict, infer, or analyze an individual’s emotional state” and prohibit applications covered under this language.

In the AI Now Institute’s 2019 report, the Institute calls for a ban on emotion AI “in important decisions that impact people’s lives and access to opportunities.” AI Now specifically cites the “contested scientific foundations” of emotion AI as justification for governments to go beyond strict regulation to an overall prohibition of the use of these technologies “in high-stakes decision-making processes.” In particular, the report lists specific examples of recommended prohibited uses, “such as who is interviewed or hired for a job, the price of insurance, patient pain assessments, or student performance in school.” Meredith Whittaker, co-director of AI Now, argued that “We need to ensure, when these systems are used in sensitive contexts, that they are contestable, that they are used fairly… and that they are not leading to increased power asymmetries between the people who use them and the people on whom they're used.”

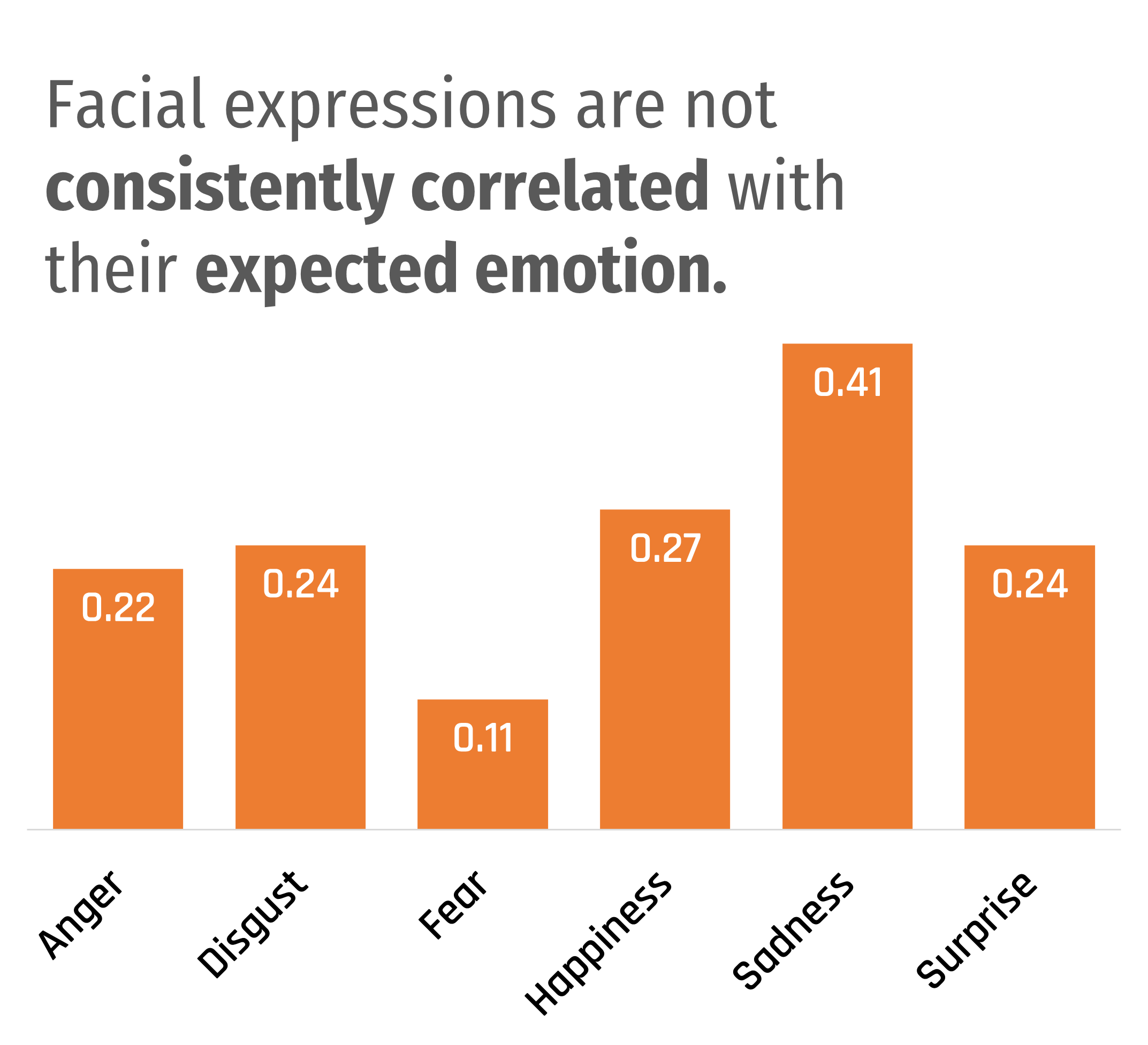

The critical uncertainty about the underlying scientific foundations of emotion AI and the challenges to its accuracy and reliability raise the question of whether governments should explicitly prohibit the use of AI technology for emotion recognition and to what extent. With doubts about the basic scientific premise that emotions can be consistently and correctly inferred from biometric data, government should consider an outright ban on all uses of emotion AI technology. If it does not reliably work and the fundamental science is flawed, why should the technology be used at all?

At the very minimum, Canadian governments must prohibit the use of emotion AI in high-risk contexts where emotion recognition technologies can significantly affect and harm individuals’ livelihoods and threaten their fundamental human rights. A flawed technology that cannot consistently and reliably live up to its expectations should not be allowed to decide matters of housing, employment, or health, and particularly should not be used in interactions with children. This policy should be a cornerstone of new biometric data legislation addressing emotion AI, starting with a ban on the use of these technologies where there is potential for “significant harm,” as defined in subsection 10.1(7) of PIPEDA. However, regulation of emotion AI needs to go beyond that definition, as it only relates to “harm, humiliation, [or] damage,” which is more of an ex-post approach outlining how individuals can seek legal action after the harm has been done. The definition must be more closely aligned with “significantly affect” in order to proactively regulate emotion recognition technologies before the damage has been done. The Ontario government should also use the opportunity of reviewing FIPPA to regulate the use of ADS in applications that can “significantly affect” an individual, as well as to include explicit language about technologies that attempt to “recognize, predict, infer, or analyze an individual’s emotional state.” Canadian governments should also govern the use of emotion AI by the public sector, government agencies, border control, and law enforcement. The use of emotion recognition technologies by government is extraordinarily intrusive in individuals’ interactions with the state, where government decisions can significantly affect an individual. It is questionable whether any use of emotion AI by the government is ever necessary beyond cost-effectiveness. The potential for harm is disproportionately greater than any potential benefits in terms of more efficient government processes or claimed increase in effectiveness for law enforcement, simply because of the power dynamic between individuals and the state.

However, Canadian governments should consider allowing the use of emotion recognition technologies in low-risk contexts that will not significantly harm individuals. The use of emotion AI in advertising, marketing, and media for sentiment analysis on products, commercials, TV shows, or movies carries a low risk of harm and is more akin to conducting user research, particularly if the data is used in aggregate and without personally identifiable information. Detecting a driver’s emotional state and fatigue offers benefits that outweigh its potential harms by alerting individuals when they need to take a rest, hopefully leading to lower rates of car accidents. And with careful, strict regulation and explicit consent, emotion AI can have health benefits as assistive technology, such as helping stroke victims in their recovery. Governments could also allow regulated experimentation with emotion AI. While it is beyond the scope of this report, the concept of a “regulatory sandbox” – which allows for real-life testing of technology products under certain conditions and with strict oversight – might be useful in tandem with a broader biometric data legislative framework.

Costing

To implement new biometric data legislation, the federal OPC should have its permanent funding increased by $10 million in the 2023-2024 fiscal year and gradually increased to $20 million per year by 2026. This estimate is in line with the projections in Fall Economic Statement 2020, which included funding for the implementation of the previously proposed Consumer Privacy Protection Act (which has yet to be enacted).

In Ontario, the Information and Privacy Commissioner of Ontario should receive an $8 million funding increase in the 2023-2024 fiscal year over its 2019-2020 budget of $20 million, with the increase rising to $12.5 million by 2026.